Created an LLM-powered device that captures group conversations as real-time images and diagrams

Timeline

2 weeks

Role

Full Stack Engineer · Product Designer · Hardware Engineer · Industrial Designer

Budget

$300

Team

Failenn Aselta

Tools

Figma · Cursor · Gemini · Raspberry Pi · React · FastAPI · Linux

Project Overview

Design a handheld device that generates real-time visuals of conversation.

Buddy resolves the disconnect of group work by acting as an intermediary that captures conversations in real time through LLM-powered image generation. Built with rapid prototyping, electronics, and full-stack software development, it preserves discussions as a visual history and saves valuable concepts from being lost to misarticulation.

The Problems

Our Goals


Diagrams made with Napkin.ai and Figma

The challenge

One of the largest bottlenecks in design is miscommunication. What if we could create a tool to address it?

The Research

7.5 hrs per week lost to miscommunication in knowledge work.

Participant

Adam

31 Years Old

Product Designer

Portrait generated with Gemini

Frustrations

- Hard to regain alignment when spoken ideas are interpreted differently by each teammate.

- Little visibility into what was actually agreed on once a working session ends.

- Good work still feels like it stalls when concepts are lost to misarticulation or memory.

How Might We

Improve group communication by clarifying ideas visually through real-time LLM image generation?

By implementing a technology that helps clarify ideas visually through LLM image generation.

Ideation

Low-Fi Wireframes

Early Drawings

01

Familiar Layout

Zero learning curve.

02

Visual Output

Pictures over text.

03

On-device Audio

Nothing leaves the room.

04

Passive Form

Ambient, never the focus.

Iteration 1

Selected for construction

Dial for cycling through iterations, an on/off button on top, and a version with an on/off button and a commit button on the front.

Iteration 3

Send button and an on/off on the front.

Iteration 4

Basic setup with the visual as the main element.

Hand-drawn sketches

Full Figma Board

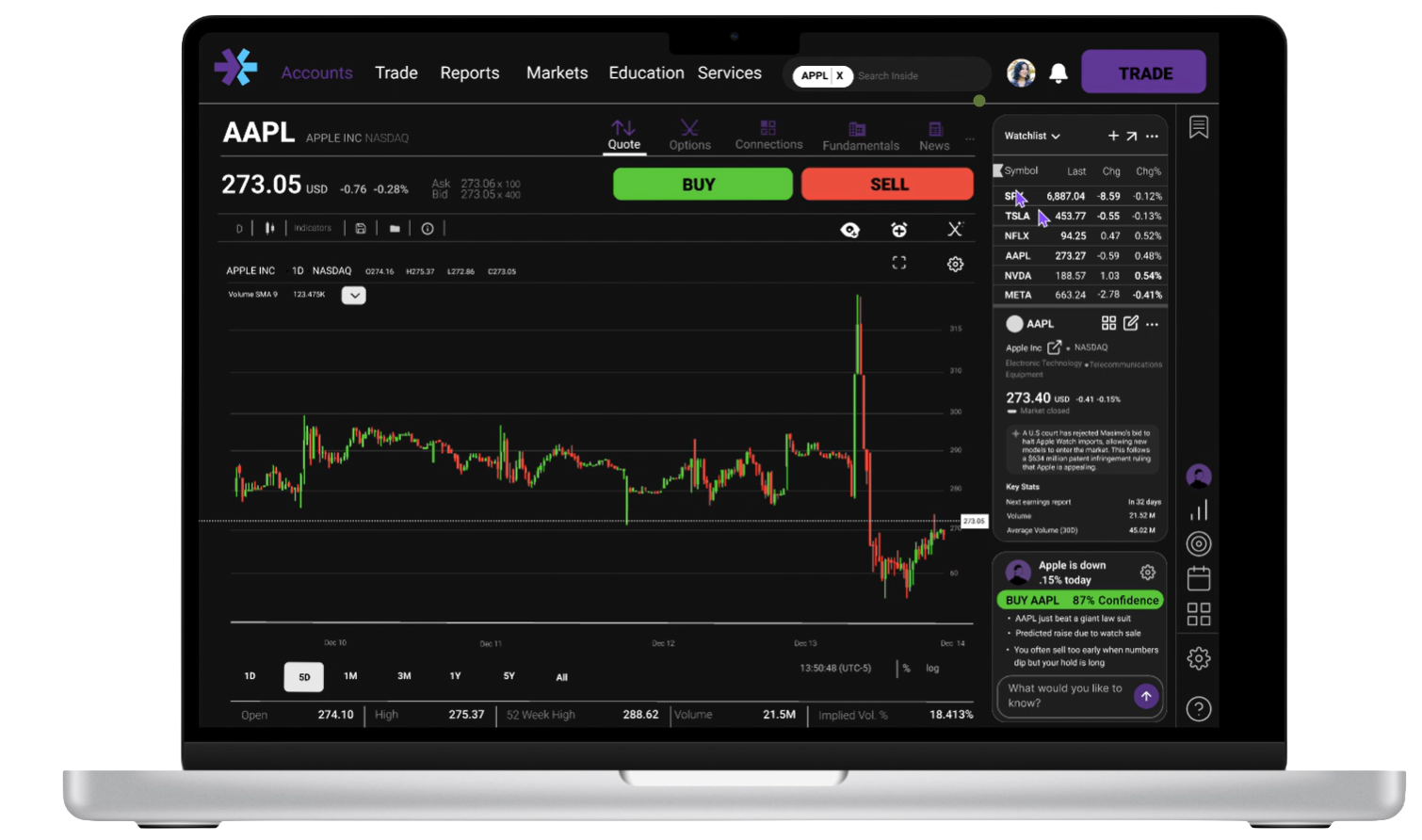

Hi-Fi Mockups

User Testing

Tested with a group of designers and engineers during a live working session.

Participants used Buddy across a real design critique session. Tasks included: capturing a decision, exporting a session summary, and interpreting a generated diagram.

64% said the layout was easy to understand but felt that dedicated hardware was excessive for such a simple concept.

How do we bring this into a mobile or desktop version to save costs?

40% said it let them focus on the conversation better but left them worried about whether it was generating correctly.

How can we monitor the images without having to backtrack?

30% said it helped with miscommunication because they could see both what they meant and what the other person meant.

Could this number get raised if we made the screen larger?

32% of the time, PDF generation worked perfectly; in the remaining exports it often cut off words.

How do we test the backend more thoroughly?

Some notable quotes

“I stopped taking notes halfway through and just focused on the convo.”

“The diagram it made was close but not exactly what we were saying.”

“I'd want this on my phone so I could reference it throughout the day.”

Engineering

How the stack works end to end

Audio is captured and sent to the Whisper API for transcription. The transcript is passed to GPT-4 for interpretation, extracting the core idea from the conversation. Depending on the output type, either Mermaid.js renders a structured diagram or fal.ai generates an image. The backend runs on FastAPI, the frontend on Vite.
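The flow described above can be sketched as a single function that chains the stages. This is a minimal sketch, not the project's actual code: `run_pipeline`, `Visual`, and the three callables are hypothetical names, and the real Whisper, GPT-4, and fal.ai calls are replaced by injected functions so the routing logic can be read and tested without network access. It also assumes the Mermaid source is passed through unchanged and rendered downstream by Mermaid.js.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Visual:
    kind: str     # "DIAGRAM" or "SKETCH"
    payload: str  # Mermaid source for diagrams, image reference for sketches


def run_pipeline(
    audio: bytes,
    transcribe: Callable[[bytes], str],
    interpret: Callable[[str], dict],
    generate_image: Callable[[str], str],
) -> Visual:
    """Audio -> transcript -> interpretation -> diagram source or image."""
    transcript = transcribe(audio)    # stands in for the transcription call
    decision = interpret(transcript)  # stands in for the LLM interpretation call
    if decision["mode"] == "DIAGRAM":
        # Assumption: Mermaid source is returned as-is and rendered downstream.
        return Visual("DIAGRAM", decision["prompt"])
    # Anything else is treated as a sketch and sent to the image generator.
    return Visual("SKETCH", generate_image(decision["prompt"]))


if __name__ == "__main__":
    # Stub stages so the sketch runs offline.
    visual = run_pipeline(
        b"raw-audio-bytes",
        transcribe=lambda _audio: "draw the signup flow",
        interpret=lambda _text: {"mode": "DIAGRAM", "prompt": "graph TD\n  A-->B"},
        generate_image=lambda prompt: "image-for:" + prompt,
    )
    print(visual.kind)
```

Passing the stages in as callables keeps each external service swappable, which matters when per-call API cost is a constraint.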

Diagrams created with Mermaid.js code and Python in a Figma plugin.

Diagrams made with Mermaid.js (rendered from Python)

# Plain string rather than an f-string: the braces below are literal prompt
# text, and an f-string would try to evaluate them.
system_prompt = """
You are a Visual Assistant. You generate Mermaid.js code OR Fal.ai image prompts.

CURRENT MODE: {DIAGRAM | SKETCH} (switch based on intent)

TASK:

1. ANALYZE USER INTENT:
- Chart, graph, flow, or timeline -> output mode: "DIAGRAM"
- Scene, photo, texture, or visual style -> output mode: "SKETCH"
- Referring to "it"/"the image"/"that" -> use CONTEXT HISTORY

2. FOR DIAGRAMS (Mermaid):
- Return valid Mermaid code only. No backticks.
- Support: graph TD, mindmap, pie, sequenceDiagram, xychart-beta, gantt.

3. FOR SKETCHES (Images):
- If refinement, keep core details and apply the change.
- set "is_refinement": true only if editing the previous image.

Return JSON ONLY:
{ "mode": "DIAGRAM" | "SKETCH", "prompt": "...", "is_refinement": true|false }
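"""

# Excerpt from the export handler. summary_lines, zip_file, and zip_buffer are
# created earlier in the same function (not shown).
# Imports inferred from the calls used below (ReportLab and FastAPI):
import io

from fastapi.responses import StreamingResponse
from reportlab.lib.pagesizes import letter
from reportlab.pdfgen import canvas

# Create a PDF summary to ensure universal accessibility
pdf_buffer = io.BytesIO()
c = canvas.Canvas(pdf_buffer, pagesize=letter)
text_obj = c.beginText(40, 750)  # start near the top-left of the page
for line in summary_lines:
    text_obj.textLine(line)  # textLine does not wrap long lines
c.drawText(text_obj)
c.save()

# Package into a downloadable ZIP artifact
zip_file.writestr("session_summary.pdf", pdf_buffer.getvalue())

# Note: zip_buffer.read() returns a complete archive only if zip_file was
# closed and zip_buffer rewound with seek(0) beforehand.
return StreamingResponse(
    io.BytesIO(zip_buffer.read()),
    media_type="application/zip",
    headers={"Content-Disposition": "attachment; filename=session_export.zip"}
)

LLM Persona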

Had to clearly define the LLM's persona, ultimately assigning it the role of a Visual Assistant for the cleanest outputs.
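Because the system prompt asks the Visual Assistant for JSON only, the reply can be checked before anything is rendered. The following is a minimal sketch of such a check, not the project's actual code: `parse_reply`, `VALID_MODES`, and `DIAGRAM_KEYWORDS` are hypothetical names, and it assumes that in DIAGRAM mode the Mermaid source travels in the `"prompt"` field.

```python
import json

VALID_MODES = {"DIAGRAM", "SKETCH"}
# First keyword of each diagram type the system prompt lists as supported.
DIAGRAM_KEYWORDS = {"graph", "mindmap", "pie", "sequenceDiagram", "xychart-beta", "gantt"}


def parse_reply(raw: str) -> dict:
    """Validate a model reply against the JSON contract in the system prompt."""
    data = json.loads(raw)  # raises json.JSONDecodeError (a ValueError) on non-JSON
    if not isinstance(data, dict):
        raise ValueError("reply must be a JSON object")

    mode = data.get("mode")
    if mode not in VALID_MODES:
        raise ValueError(f"unexpected mode: {mode!r}")

    prompt = data.get("prompt")
    if not isinstance(prompt, str) or not prompt.strip():
        raise ValueError("prompt must be a non-empty string")

    if mode == "DIAGRAM":
        # The system prompt forbids backticks in Mermaid output.
        if "`" in prompt:
            raise ValueError("diagram code must not contain backticks")
        first_line = next(line.strip() for line in prompt.splitlines() if line.strip())
        if first_line.split()[0] not in DIAGRAM_KEYWORDS:
            raise ValueError(f"unsupported diagram type: {first_line!r}")

    # Assumption: a missing flag means "not a refinement", and only sketches
    # can refine a previous image.
    is_refinement = bool(data.get("is_refinement", False)) and mode == "SKETCH"
    return {"mode": mode, "prompt": prompt, "is_refinement": is_refinement}
```

Rejecting a malformed reply at this point gives the backend a clear place to retry or fall back, rather than passing broken Mermaid code to the renderer.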

Image Generation

A major technical hurdle was training the model to generate proper images without relying on explicit keywords.
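One way to support follow-ups such as "make it blue" without explicit keywords is to keep a short history of image prompts and fold the previous one into a refinement. This is a sketch under stated assumptions, not the method used in the project: `SketchHistory` and the `"Change:"` joining format are hypothetical, and the `is_refinement` flag is assumed to come from the model's JSON reply.

```python
from typing import List, Optional


class SketchHistory:
    """Keeps earlier image prompts so follow-up requests have context."""

    def __init__(self) -> None:
        self._prompts: List[str] = []

    def last(self) -> Optional[str]:
        """Return the most recent prompt, or None if nothing was generated yet."""
        return self._prompts[-1] if self._prompts else None

    def resolve(self, prompt: str, is_refinement: bool) -> str:
        """Return the prompt to send to the image model and record it."""
        previous = self.last()
        if is_refinement and previous is not None:
            # Keep the core details of the previous image and apply the change.
            prompt = f"{previous}. Change: {prompt}"
        self._prompts.append(prompt)
        return prompt
```

A refinement with no prior image falls through unchanged, so the first request in a session still produces a usable prompt.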

Session Export

Engineered a session-commit function that zips all generated assets and transcripts into a single download, together with a PDF summary that opens on any device. Transformed a transient AI conversation into a professional leave-behind artifact.
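The packaging step can be illustrated with the standard library alone. This is a minimal sketch, assuming the assets are already in memory as bytes: `build_session_zip` and the archive layout (`assets/`, `transcript.txt`) are hypothetical, and only the `session_summary.pdf` name comes from the excerpt above.

```python
import io
import zipfile
from typing import Dict


def build_session_zip(assets: Dict[str, bytes], transcript: str, summary_pdf: bytes) -> bytes:
    """Bundle generated assets, the transcript, and the PDF summary into one ZIP."""
    buffer = io.BytesIO()
    # Leaving the with-block closes the archive, which writes the central
    # directory; the underlying BytesIO stays open and readable.
    with zipfile.ZipFile(buffer, "w", zipfile.ZIP_DEFLATED) as archive:
        for name, data in assets.items():
            archive.writestr(f"assets/{name}", data)
        archive.writestr("transcript.txt", transcript)
        archive.writestr("session_summary.pdf", summary_pdf)
    return buffer.getvalue()
```

Returning the finished bytes avoids the need to rewind the buffer before handing it to a response object.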

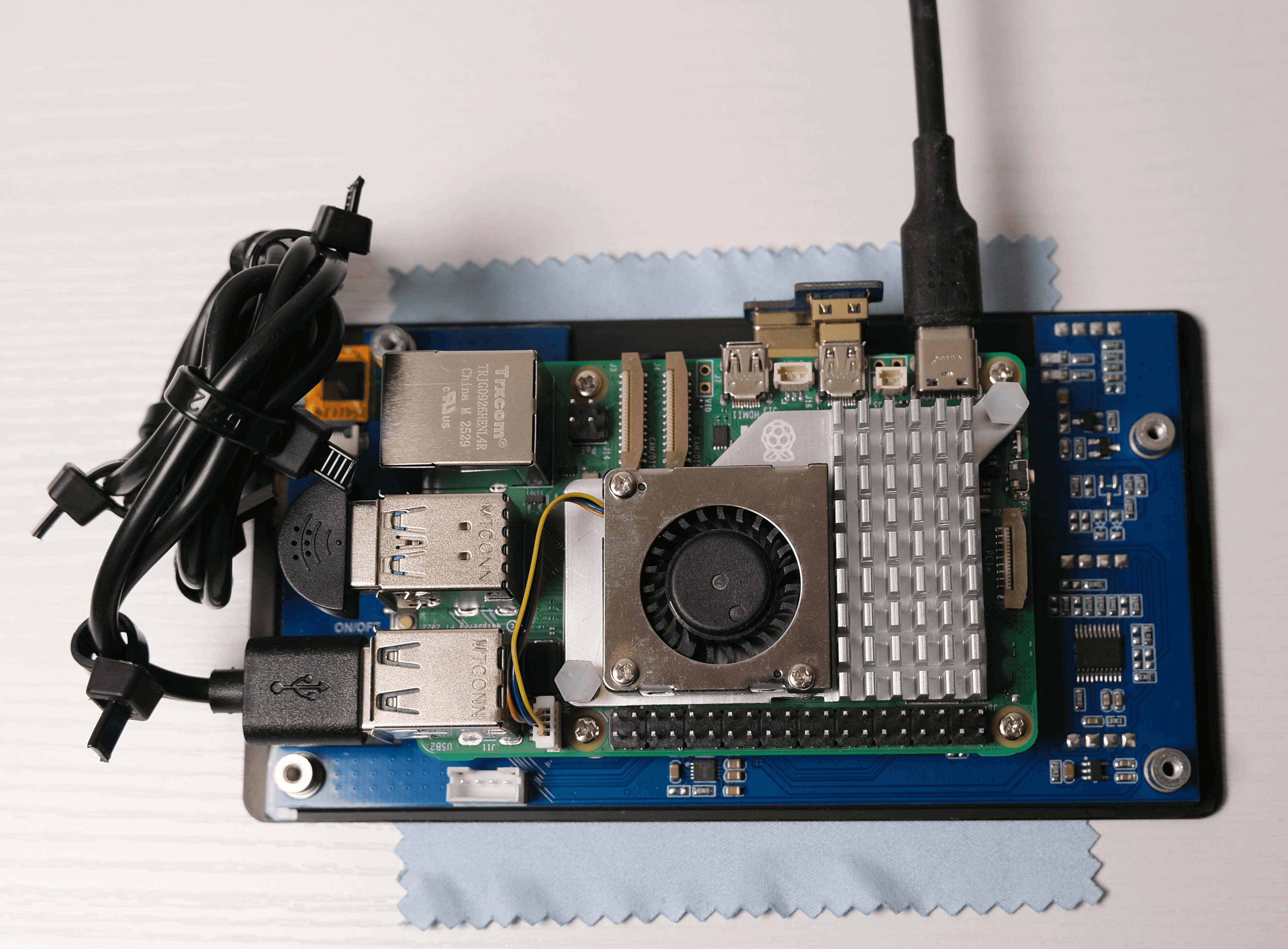

Hardware

Progress Photos

Building the physical enclosure around a Raspberry Pi imposed hardware constraints that forced architectural clarity in a way cloud deployment never would have.

Final Product

The Finished Build

Impact

64%

found the layout intuitive on first use

40%

trusted capture enough to stop taking notes

30%

said visuals aligned the room faster than talk alone

Next Steps

- 01

Mobile or desktop form factor. Bring the same layout to phone or desktop software to save costs and right-size the concept away from dedicated hardware.

- 02

Live generation monitor. Surface diagram and image status in session so users trust output without leaving the conversation to verify.

- 03

Larger shared display. Test screen size and placement so both people can read generated visuals without squinting or crowding the device.

- 04

PDF export QA. Add automated export tests and layout checks so truncated words and broken pages are caught before users download.
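For the PDF export QA step, one plausible source of cut-off words is summary lines that are wider than the page, since a line drawn without wrapping runs past the right margin. The sketch below shows a pre-processing step and a simple pagination helper that an automated test could assert against. The function names and the `max_chars` and `lines_per_page` defaults are assumptions and would need tuning to the actual font and page size.

```python
import textwrap
from typing import Iterable, List


def wrap_summary(lines: Iterable[str], max_chars: int = 90) -> List[str]:
    """Break long summary lines at word boundaries before they reach the PDF."""
    wrapped: List[str] = []
    for line in lines:
        # textwrap.wrap returns [] for an empty string; keep blank lines as spacing.
        wrapped.extend(textwrap.wrap(line, width=max_chars) or [""])
    return wrapped


def paginate(lines: List[str], lines_per_page: int = 50) -> List[List[str]]:
    """Split wrapped lines into page-sized chunks so text does not run off the bottom."""
    return [lines[i:i + lines_per_page] for i in range(0, len(lines), lines_per_page)]
```

An export test can then check that no wrapped line exceeds `max_chars` and that the words coming out match the words going in.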

What I Learned

- 1

Budget Is the Real Constraint

Budget is the most important constraint in any project. Things you want often have to be sacrificed just to get the product to market—and to earn enough to fund the next version.

- 2

Scale Before You Fabricate

Scalability should be thought about earlier. It was fun to get hardware running and learn shell scripting, but building an individual handheld for everyone at this size and scale is too expensive.

- 3

Less Is More

Complex problems often have simple solutions. It is easy to get caught up in complexity and overdesign—in the words of Mies, less is often more.

Considerations

Layout & Form Factor

64%

said the layout was easy to understand but felt extreme as hardware for such a simple concept—mobile or desktop could reduce cost and intimidation.

Ambient Capture

40%

said it let them focus on the conversation but worried whether output was generating correctly—monitoring images without backtracking is the next UX pass.

Visual Alignment

30%

said it reduced miscommunication because both parties saw the same artifact—a larger screen could raise alignment further.

Session Export

32%

of the time, PDF generation worked perfectly; the remaining exports often cut off words, so backend layout QA and export tests need to run before every release.

Tradeoff made

The largest tradeoff was not including video. Image-generation APIs are priced per call, and sessions burned through credits quickly—adding live video would have overrun both budget and the two-week sprint. v1 stayed audio → diagram or still image → PDF export; video waits until usage and hardware can support it.

Every hardware decision bent toward keeping the bill of materials under $300. An external battery replaced an internal cell—lithium cost and assembly complexity were harder to justify than a sealed USB pack. The Raspberry Pi was a step down from the RAM target; more memory would have helped concurrent transcription and generation, but unit cost had to win for v1.

Bibliography

Links

- Arias, Ernesto G., and Gerhard Fischer. "Boundary Objects: Their Role in Articulating the Task at Hand and Making Information Relevant to It." International ICSC Symposium on Interactive and Collaborative Computing. University of Colorado Boulder, 2000. l3d.colorado.edu

- Brubaker, E. R., S. D. Sheppard, P. J. Hinds, and M. C. Yang. "Objects of Collaboration: Roles of Objects in Spanning Knowledge Boundaries in a Design Company." 34th International Conference on Design Theory and Methodology. MIT, 2022. dspace.mit.edu

- Huang, Y.-H. "Understanding the Collaboration Difficulties Between UX Designers and Developers in Agile Environments." Master's thesis, Purdue University, 2018. Documents wasted time on rework, revisions, and misaligned handoffs; aligns with ~7.5 hrs/week lost to poor communication (Grammarly / Harris Poll, 2023). docs.lib.purdue.edu

- Forrester Consulting. "The Total Economic Impact of Figma." Commissioned by Figma, 2024. Composite organization: 35% productivity gain in development; 60% faster ideation and creation workflows. figma.com

- Grammarly and The Harris Poll. "State of Business Communication." 2023. Knowledge workers lose ~7.5 hours/week to poor communication; firms with 500 employees lose $6.25M/year resolving communication issues. At 56% less meeting waste, equivalent savings ≈ $3.5M/year for the same org size. grammarly.com

- Fellow.app. "The State of Meetings Report 2024." Survey of how teams run meetings, time spent, and productivity impact. fellow.ai
